Harness Engineering

The golden zone of team augmentation

The world of harness engineering continues to evolve. We've stopped talking about "AI assistants." We've stopped talking about "copilots." We are chasing team augmentation: how many full-time teammates can one person credibly stand in for?

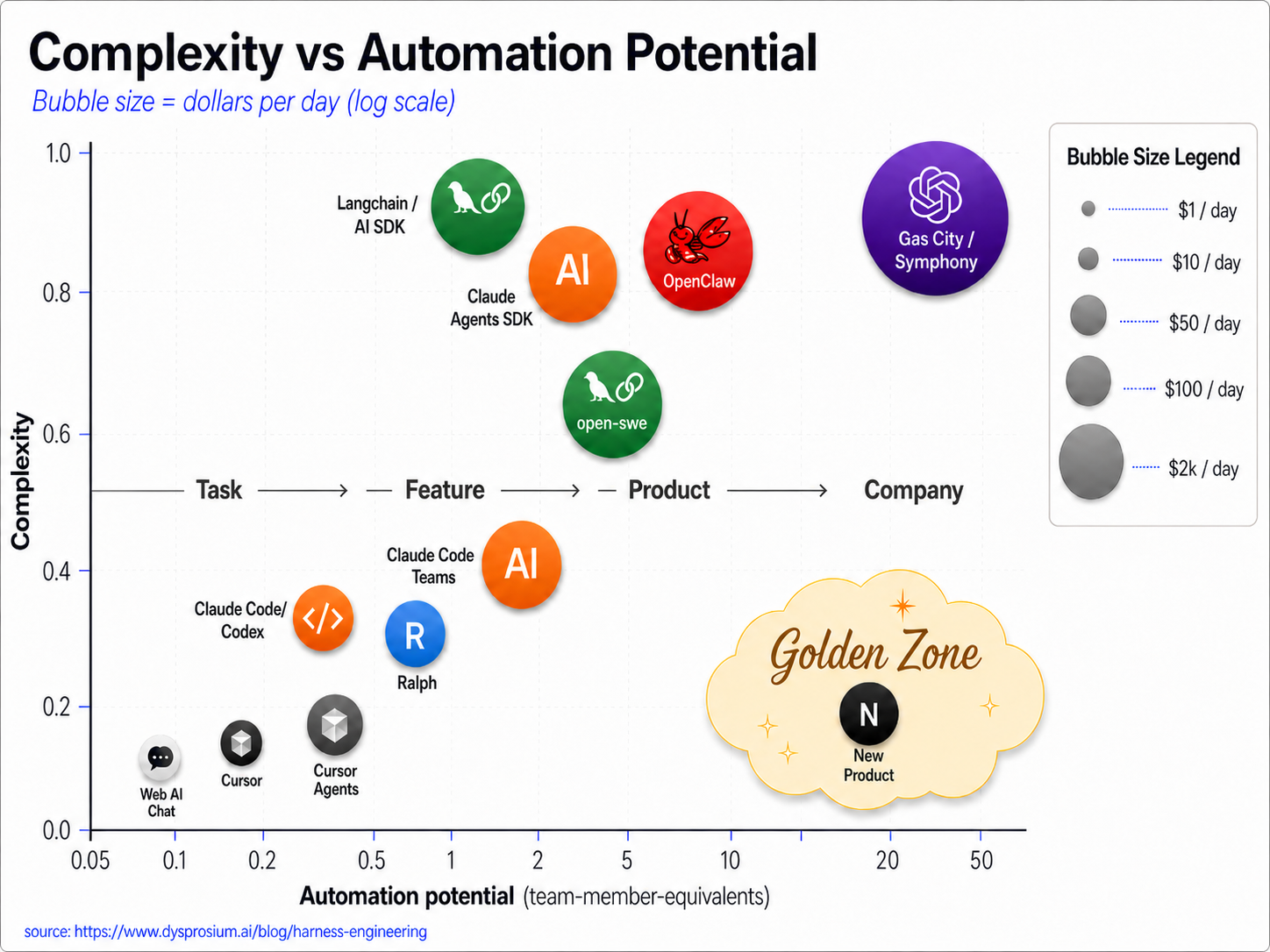

I've been thinking about the tools in the ecosystem on two axes: how much automation potential a tool delivers (measured in team-member-equivalents — what would it take in human headcount to do the same throughput?), and how much complexity it imposes on the operator. The third dimension, on a log scale because the spread is enormous, is what it costs per day to actually run.

Here’s what I came up with:

Chasing leverage

As we push to the right, chasing additional productivity, we start with products that are not augmenting a team. Cursor, Cursor Agents, Claude Code, and Codex are excellent tools, but they're augmenting you, the individual. They sit at 0.2 to 0.4 team-members. That's the difference between "I'm faster" and "I replaced a hire." The complexity at this phase is minimal: you install something and use it. It's pre-packaged software.

Next comes a more complex setup. This is the LangChain/AI SDK, open-swe evolution. These offer more automation per dollar, but the setup cost pushes complexity higher: it takes additional time, energy, and knowledge before you get a single useful agent run. OpenClaw and the Claude Agents SDK live near here too. The output is real, sometimes excellent, but the operator tax is high. Are you augmenting a team or becoming a platform engineer for your own augmentation?

All the way up are the tokenmaxxing approaches. Here it takes work just to understand what the tools even are: you have to learn the semantics of the system and set it up before work on your problem begins. Gas City and Symphony-style setups are the canonical example: ~20 team-members of throughput and, according to Ryan Lopopolo at OpenAI, $2,000+ per day in inference. The complexity is high (luckily, Ryan L and team or Steve Yegge [Gas Town] have already built the orchestration). You’re giving up more control of your codebase (another possible axis in the diagram here) with the promise of a team to do your bidding.
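To put those two numbers on the same footing, here is a back-of-the-envelope sketch. The function name is made up for illustration, and the inputs are just the rough figures quoted above, so treat the result as a floor rather than a measurement.

```python
def cost_per_teammate_day(daily_inference_usd: float, teammate_equivalents: float) -> float:
    """Daily inference spend divided by team-member-equivalents of throughput."""
    if teammate_equivalents <= 0:
        raise ValueError("teammate_equivalents must be positive")
    return daily_inference_usd / teammate_equivalents


# Figures from the text: roughly 20 team-members, at least $2,000 per day.
print(cost_per_teammate_day(2000, 20))  # 100.0
```

Since the quoted spend is "$2,000+", that works out to at least $100 per team-member-equivalent per day.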

Personally, I’m exploring up and to the right, and in a future post I’ll take open-swe for a test drive.

The golden zone

The golden zone is the optimization of all three: lots of output, minimal complexity, reasonable token spend. A human can install the package, write the spec, hit go, and walk away, and there's a well-built product at the end without 10k in tokens to get there.

You’re correct to point out that coding was never the only hard part. Building a product also includes:

understanding the problem/market/user

architecting for scale/security/speed

making it usable and powerful at the same time

and the rest of the SDLC

So it may be that what keeps us out of this zone is not code generation at all, but the fact that the other parts of the product process get in the way, and those need to be improved. Ralph for Product Management, anyone? Keep asking the customer what their problem is until they hang up the phone.

How we get to the golden zone

Bag of ideas:

The path forward isn't more sophisticated agent frameworks; it's tighter, dumber, more relentless loops with better-defined success criteria and more cost-efficient, task-specific models. Ralph on, my friends.

Token costs come down because inner-loop coding models don’t need to know who won the 1964 World Series (Beckett keeps telling me it's the Cardinals); they just need to know Python. Using an ensemble of these models with correct orchestration drives efficiency.
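One way to picture "correct orchestration" is a router that sends inner-loop coding work to the cheapest model that can handle it and only escalates when nothing smaller will do. This is a sketch under loud assumptions: the model names, prices, and task kinds below are invented for illustration and don't correspond to any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str                   # hypothetical model name
    cost_per_1k_tokens: float   # hypothetical price in dollars
    skills: frozenset[str]      # task kinds this model is trusted with


# Invented ensemble: narrow, cheap models plus one generalist.
ENSEMBLE = [
    Model("small-python-coder", 0.001, frozenset({"edit_code", "write_test"})),
    Model("mid-reviewer", 0.004, frozenset({"review_diff"})),
    Model("big-generalist", 0.030,
          frozenset({"edit_code", "write_test", "review_diff", "plan"})),
]


def route(task_kind: str) -> Model:
    """Pick the cheapest model in the ensemble that can handle the task kind."""
    capable = [m for m in ENSEMBLE if task_kind in m.skills]
    if not capable:
        raise ValueError(f"no model can handle {task_kind!r}")
    return min(capable, key=lambda m: m.cost_per_1k_tokens)


if __name__ == "__main__":
    for kind in ("edit_code", "review_diff", "plan"):
        print(kind, "->", route(kind).name)
```

The generalist is still in the ensemble, but it only gets the work nobody cheaper is trusted with, which is where the savings would come from.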

The complexity drops because you stop architecting and start specifying. You write the eval, you write the goal, you press go. The operator's job becomes defining what "done" looks like. Token costs stay reasonable because each loop iteration is cheap with the right models; you only pay for the convergence. You just give up control over, and caring about, the code artifact. Let Claude take the wheel.
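Here's a minimal sketch of that "write the eval, write the goal, press go" loop, assuming the agent and the eval are just callables. `ralph_loop`, `run_agent`, `passes_eval`, and the flat per-iteration cost are all hypothetical names and simplifications, not the API of any real tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class LoopResult:
    done: bool         # did the eval pass before the budget ran out?
    iterations: int    # how many agent runs were used
    spent: float       # total spend in dollars (hypothetical accounting)


def ralph_loop(
    goal: str,
    run_agent: Callable[[str], str],      # hypothetical: takes the goal, returns an artifact
    passes_eval: Callable[[str], bool],   # the operator's definition of "done"
    cost_per_iteration: float,
    budget: float,
) -> LoopResult:
    """Re-run the same goal until the eval passes or the budget is gone."""
    spent = 0.0
    iterations = 0
    while spent + cost_per_iteration <= budget:
        artifact = run_agent(goal)
        iterations += 1
        spent += cost_per_iteration
        if passes_eval(artifact):
            return LoopResult(True, iterations, spent)
    return LoopResult(False, iterations, spent)


if __name__ == "__main__":
    # Stand-in agent that succeeds on its third try; a real one would call a model.
    attempts = iter(["draft", "draft", "tests pass"])
    result = ralph_loop(
        goal="make the test suite green",
        run_agent=lambda goal: next(attempts),
        passes_eval=lambda artifact: artifact == "tests pass",
        cost_per_iteration=0.25,
        budget=5.0,
    )
    print(result)
```

Notice what the operator actually writes here: the goal string, the eval, and the budget. Everything else is the loop being dumb and relentless.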

When a human team has worked together for 3 years, the efficiency is off the charts. Teamwork makes the dream work. In agent town, skill files and durable context win. SKILL.md, AGENTS.md, persistent memory, and scoped contexts collapse setup cost dramatically. If the agent shows up already knowing your stack, your conventions, your test patterns, your deployment quirks, then "complex setup" stops being a moat and starts being a one-time cost amortized across every future task.
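As a rough illustration of "showing up already knowing," here is a sketch that gathers whatever SKILL.md and AGENTS.md files exist in a repo and puts them in front of the task. The file names come from the paragraph above; the function names, the prompt layout, and the discovery-by-recursive-search are my assumptions, not how any particular tool loads these files.

```python
from pathlib import Path

# File names taken from the text; real tools may discover them differently.
CONTEXT_FILES = ("AGENTS.md", "SKILL.md")


def load_durable_context(repo_root: Path) -> str:
    """Concatenate whichever context files exist under repo_root, in a stable order."""
    sections = []
    for name in CONTEXT_FILES:
        for path in sorted(repo_root.rglob(name)):
            rel = path.relative_to(repo_root).as_posix()
            body = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {rel}\n{body}")
    return "\n\n".join(sections)


def build_prompt(repo_root: Path, task: str) -> str:
    """Prefix the task with durable context so every run starts from the same baseline."""
    context = load_durable_context(repo_root)
    if not context:
        return f"# Task\n{task}"
    return f"{context}\n\n# Task\n{task}"
```

You write those files once, and every later call to `build_prompt` reuses them, which is the amortization argument in code form.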

Complexity shift

So who gets the complexity?

Less for the operator. Dramatically less. The whole golden zone bet is that the person sitting at the keyboard does less plumbing, not more.

More for the system. Loops need good evals. Skill files need maintenance. Invisible orchestration needs routing logic, fallback handling, cost controls. The complexity doesn't disappear, though; it migrates from the operator's lap to the tool's internals, where it belongs. But how much control do you have, and how much do you want, in this great-power/great-responsibility world? We love to tweak our environments, processes, stack, languages, etc. Now you’re into the harness-customization game.
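To make "fallback handling" and "cost controls" a little more concrete, here's a sketch of the kind of plumbing that moves into the system. The provider tuples, the flat per-call cost, the daily cap, and the choice to bill failed calls are all assumptions for illustration.

```python
from typing import Callable, Sequence


class BudgetExceeded(Exception):
    """Raised when the next call would push spend past the daily cap."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, float, Callable[[str], str]]],
    spent_today: float,
    daily_cap: float,
) -> tuple[str, str, float]:
    """Try providers in order; return (provider name, reply, new spend).

    Each provider is (name, cost per call in dollars, callable). All hypothetical.
    """
    errors = []
    for name, cost, call in providers:
        # Cost control: refuse before spending, not after.
        if spent_today + cost > daily_cap:
            raise BudgetExceeded(f"{name} would exceed the ${daily_cap:.2f} cap")
        try:
            reply = call(prompt)
        except Exception as exc:  # fallback: note the failure, try the next provider
            errors.append(f"{name}: {exc}")
            spent_today += cost   # assumption: failed calls are still billed
            continue
        return name, reply, spent_today + cost
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

None of this is hard, but somebody has to own it, and the bet is that it's the tool rather than the person at the keyboard.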

The arc is clear:

We started with coding → autocomplete, then chat-based code generation. Cursor and Claude Code own this.

We moved to designing-and-coding → the operator architects, the agent implements. Claude Code Teams, Cursor Agents.

The golden zone is planning-designing-architecting-coding-testing → the operator specifies intent and acceptance criteria, the system handles everything else.

Can one person stand in for a team of ten? Are we willing and able to let go of the current model?

If you’ve used Lovable, it feels pretty awesome when you get started. Chat to an agent, it builds as you go, you put credits into the machine and out comes working code. Deploy it to their cloud, hook up some DNS and boom (this took me under an hour https://agentstomp.ai/). As complexity gets to enterprise grade, it stops being worth the energy and time to agent-splain complex business requirements, multi-tenant SaaS needs, permissions systems, or anything requiring complex architecture. This is where the harness-engineering approach has to shine. It's slower to get started, but as you embrace complexity, throwing a team of specialists at hard problems has to deliver the goods.

Next month there will be another leap forward. The question is: can we get to the “Golden Zone” of quick-to-build, token-efficient, complete working systems, or do we not get to have our cake and eat it too?